Week 1

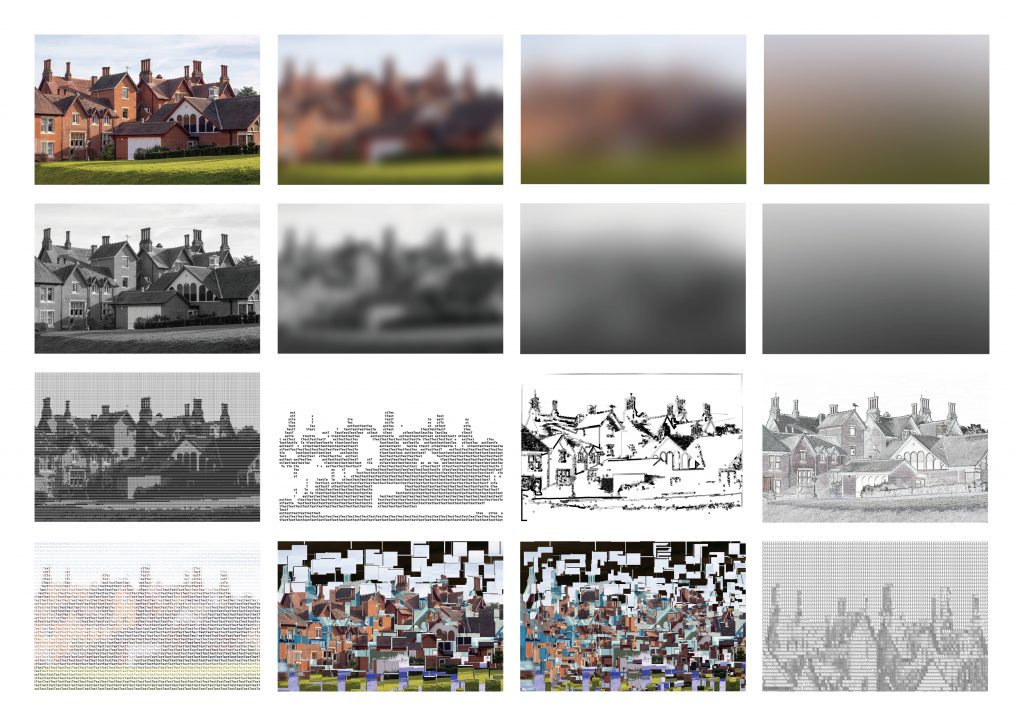

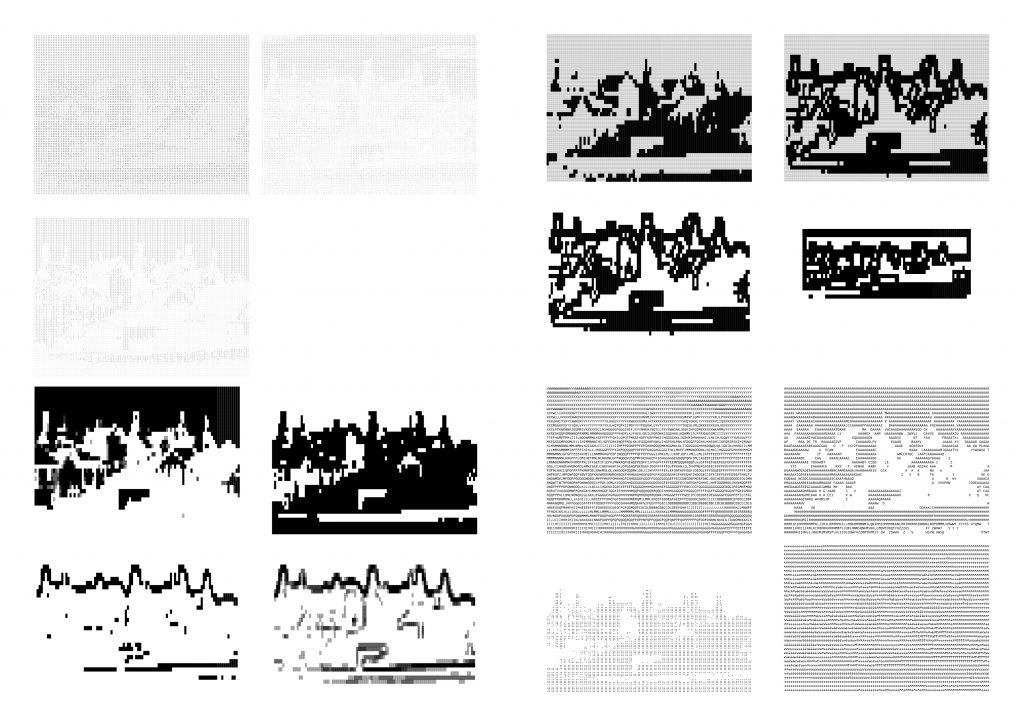

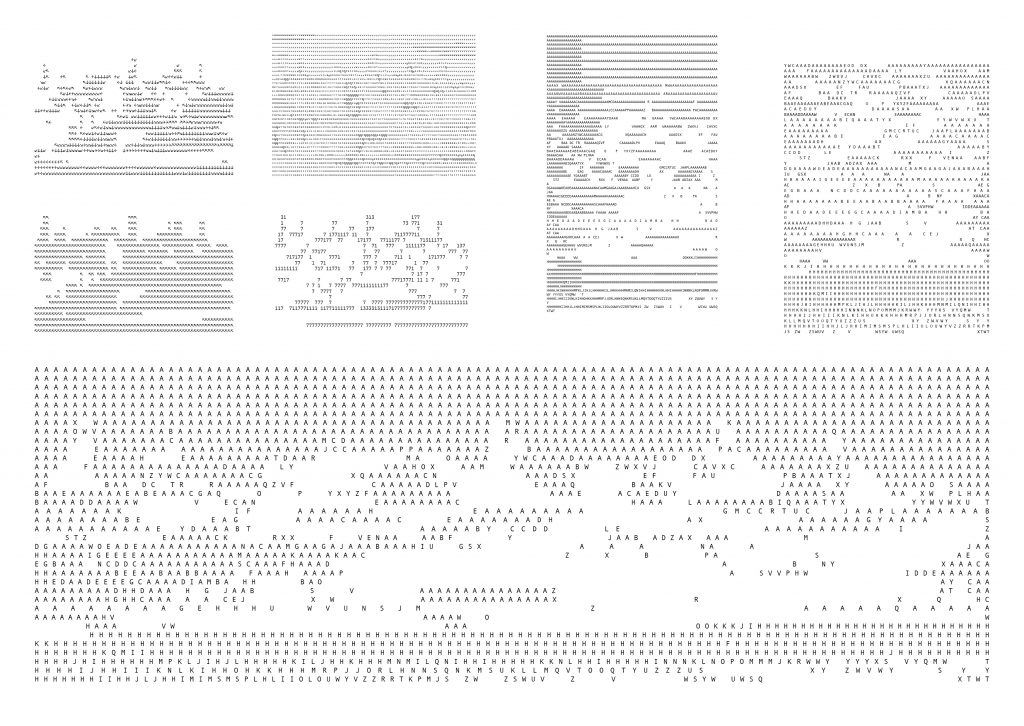

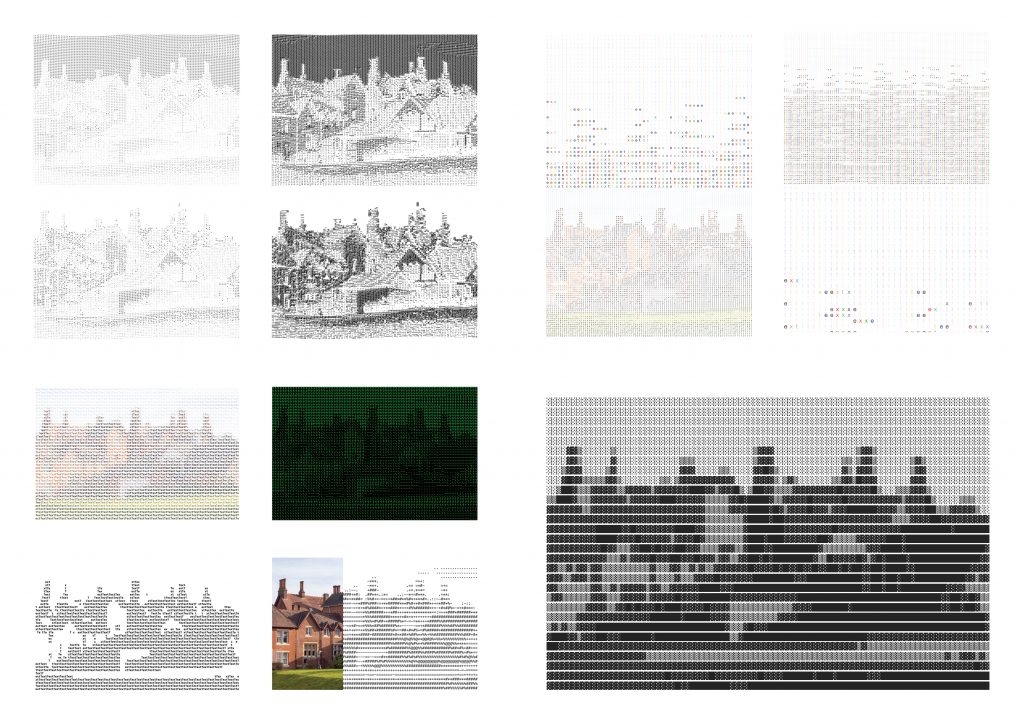

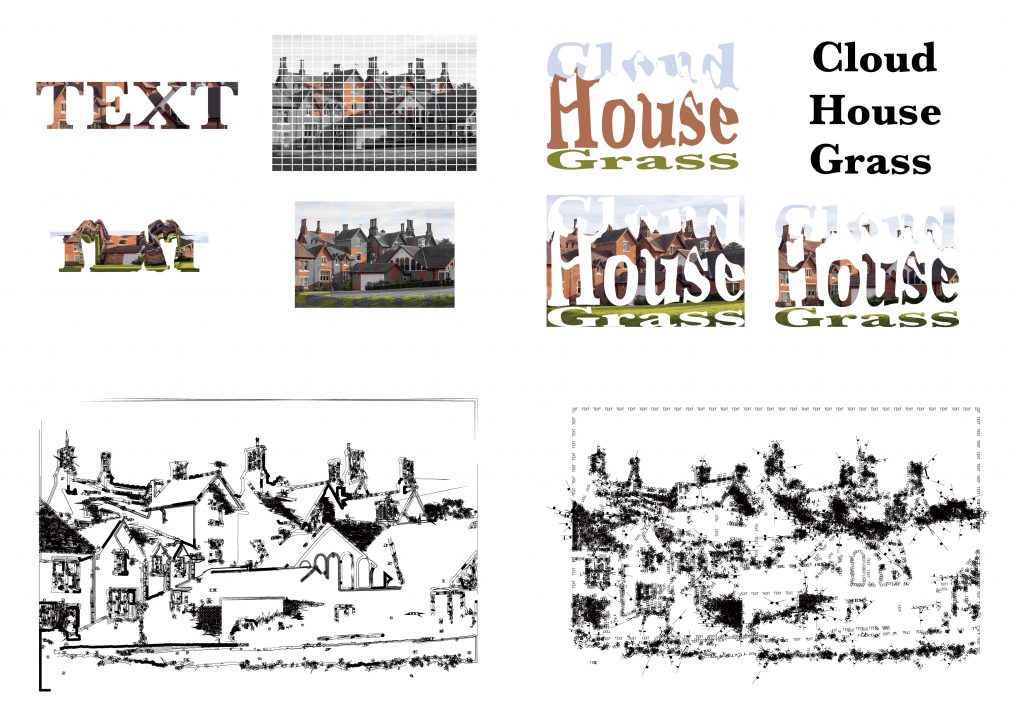

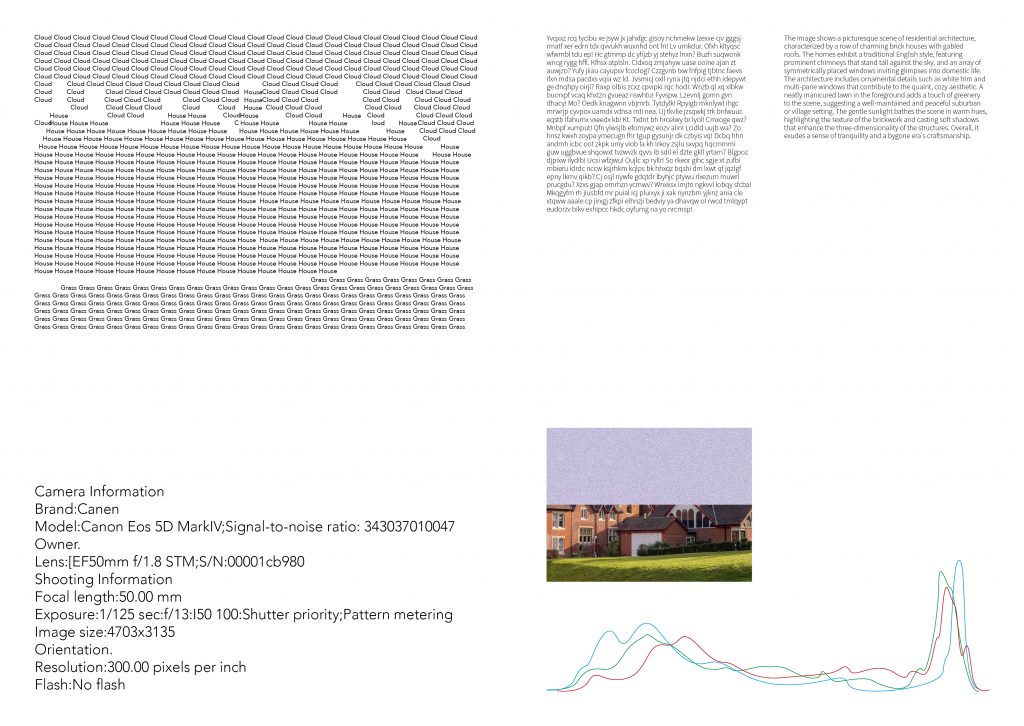

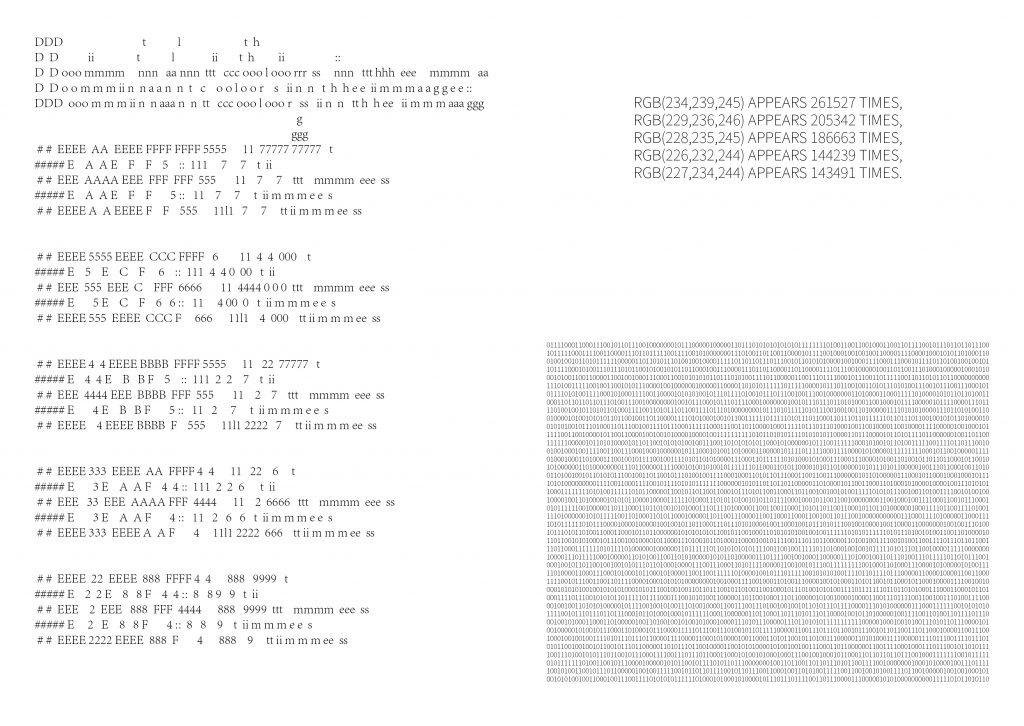

For my first week’s work, I chose to convert images into text.

Since my process is very subjective, most of my 100 iterations were based on ASCII.

Reflection

Throughout this iterative process, I am always giving the computer the logic for what I want the pixels of the image to be transformed into. I’m very interested in giving the computer a logic of this kind.
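To make that idea of "giving the computer a logic" concrete, here is a minimal sketch of one possible pixel-to-character rule. It is not the exact process behind my iterations. The character ramp, the function name `pixels_to_ascii`, and the tiny hand-written brightness grid are all assumptions made for illustration. A real image would first need to be loaded and converted to greyscale.

```python
# Hypothetical character ramp, ordered from dark to light.
# Swapping this string for another one changes the "logic" of the output.
RAMP = "@%#*+=-:. "

def pixels_to_ascii(pixels, ramp=RAMP):
    """Map a 2D grid of brightness values (0-255) to lines of text."""
    lines = []
    for row in pixels:
        chars = []
        for value in row:
            # Scale 0..255 onto an index 0..len(ramp)-1.
            index = value * (len(ramp) - 1) // 255
            chars.append(ramp[index])
        lines.append("".join(chars))
    return "\n".join(lines)

# A tiny 3x4 grid standing in for a real greyscale image (assumed data).
sample = [
    [0, 64, 128, 255],
    [255, 128, 64, 0],
    [0, 0, 255, 255],
]
print(pixels_to_ascii(sample))
```

Each iteration can then be thought of as a different rule: a different ramp, a different scaling, or a different way of reading the pixels.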

Week 2

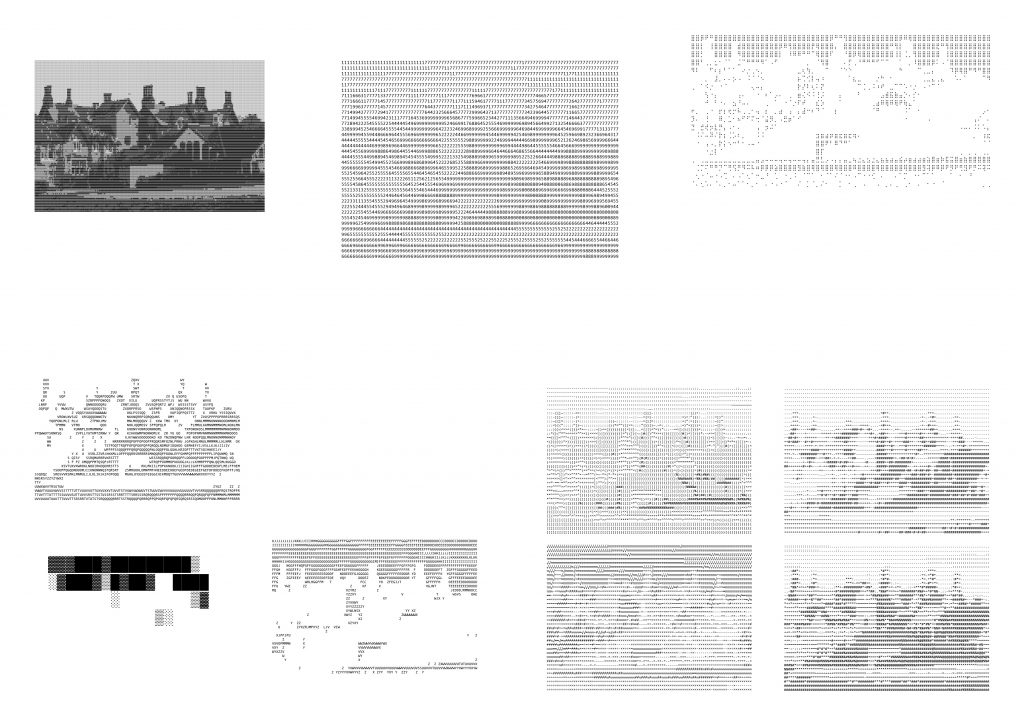

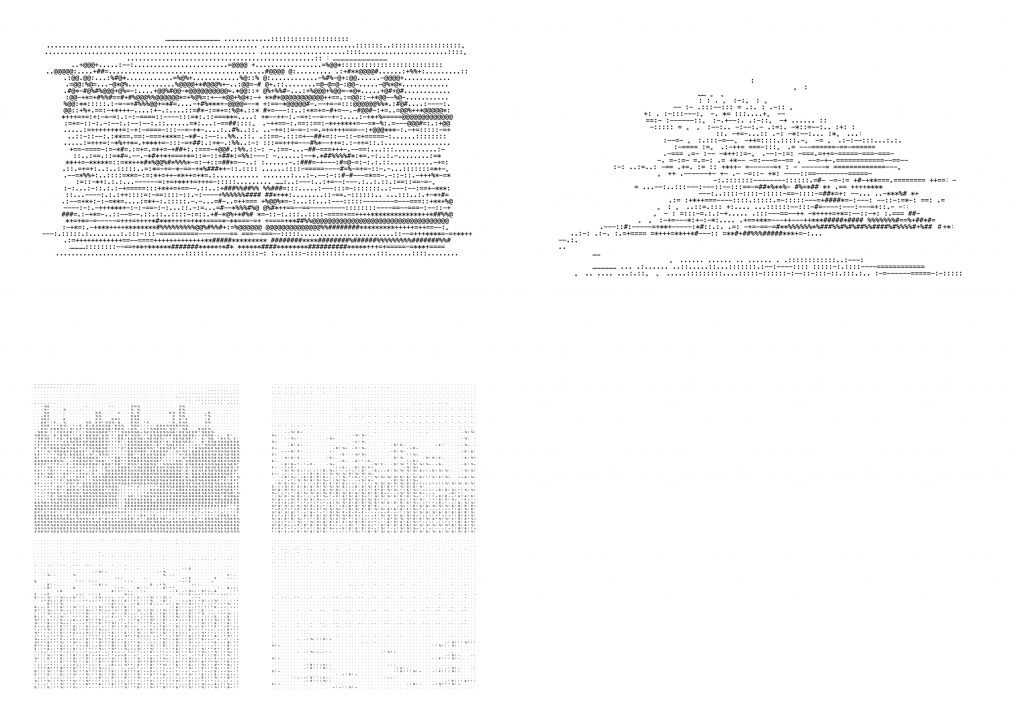

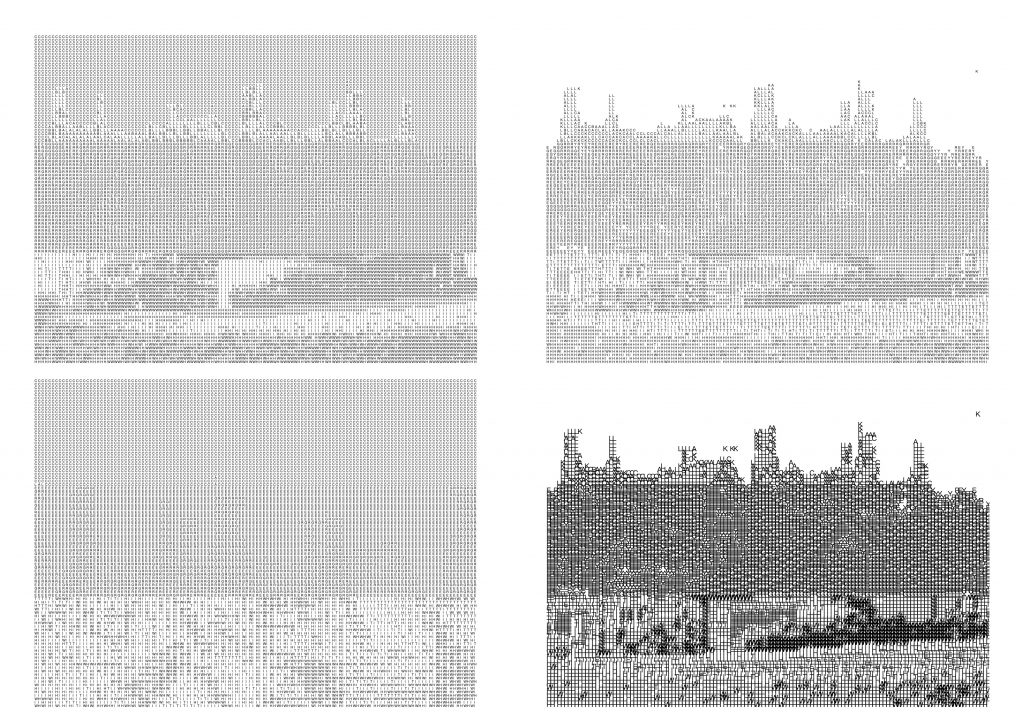

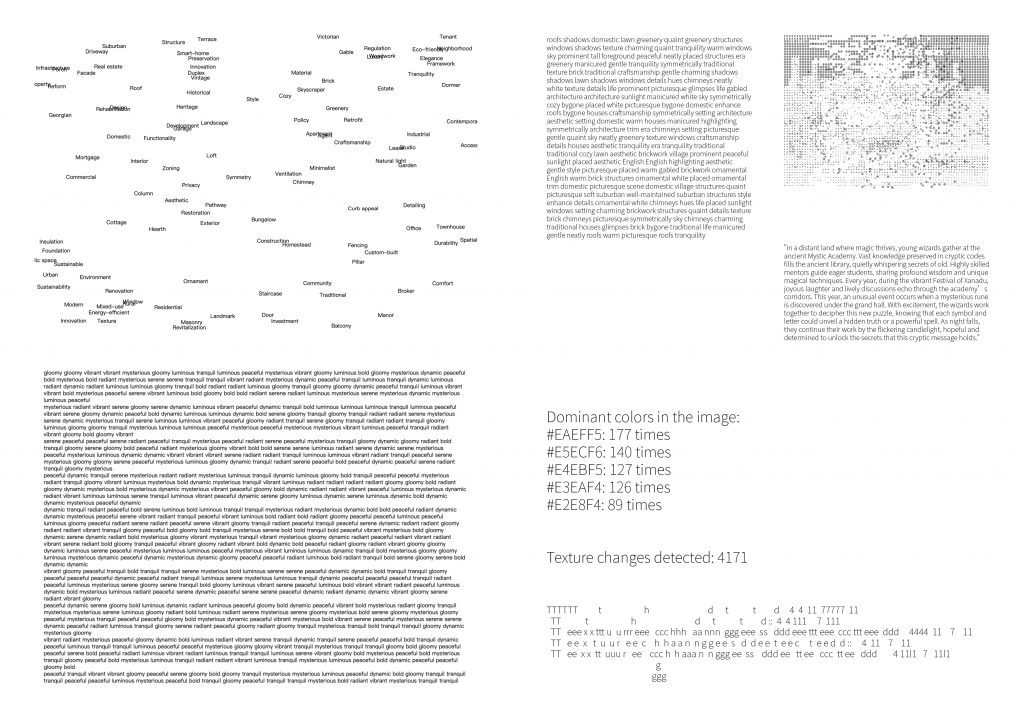

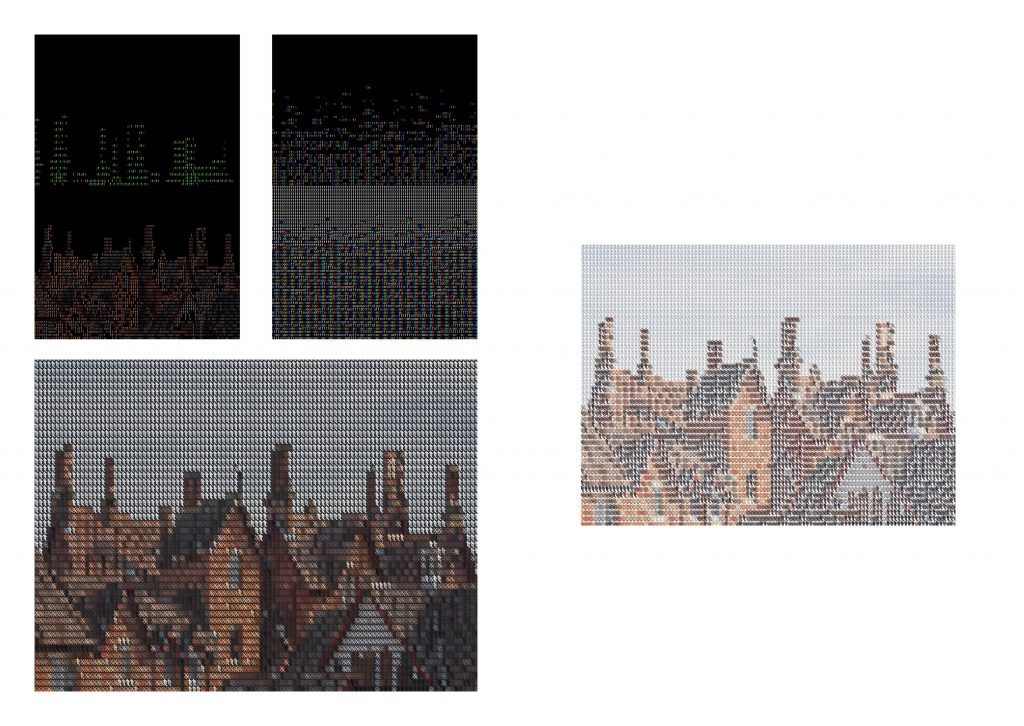

This week, I tried blurring and varying the image to different degrees, changing its colour, its quality, and its level of abstraction. I also tried to use serendipity to arrive at answers to questions I couldn’t have predicted. When the image was processed, what was lost? Readability? Recognition? I’ve made 100 different versions of an image, so what will the computer make of those 100 versions, and how do I feel about them?

Prompted by the reason the ImageNet project was started, and by the projects From ‘Apple’ to ‘Anomaly’ and Myriad (Tulips), I also tried to create a dataset (in fact, I already had 100 samples) for the computer to recognise.

I also created some new samples to add to the dataset, with different levels of blurring and different colours, because I wanted to know how the computer would perceive different versions of the same image.
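As a rough illustration of producing samples at different levels of blurring, the sketch below applies a simple box blur at several radii to a small brightness grid. This is an assumed stand-in technique, not necessarily the tool or filter I used. The names `box_blur` and `make_variants`, the radii, and the sample grid are all hypothetical.

```python
def box_blur(pixels, radius):
    """Return a blurred copy of a 2D brightness grid using a simple box blur."""
    height, width = len(pixels), len(pixels[0])
    result = []
    for y in range(height):
        row = []
        for x in range(width):
            total, count = 0, 0
            # Average every pixel inside the window, clipped at the image edges.
            for ny in range(max(0, y - radius), min(height, y + radius + 1)):
                for nx in range(max(0, x - radius), min(width, x + radius + 1)):
                    total += pixels[ny][nx]
                    count += 1
            row.append(total // count)
        result.append(row)
    return result

def make_variants(pixels, radii=(0, 1, 2)):
    """Build one blurred sample per radius; radius 0 leaves the image unchanged."""
    return {radius: box_blur(pixels, radius) for radius in radii}

# A single bright pixel on a dark background (assumed data).
sample = [
    [0, 0, 0],
    [0, 255, 0],
    [0, 0, 0],
]
variants = make_variants(sample)
for radius, image in variants.items():
    print(radius, image)
```

The larger the radius, the more the bright pixel dissolves into its surroundings, which is the kind of gradual loss of detail I wanted the computer to respond to.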

Interestingly, for the original image, the computer gave the same answer as I did. But for some of the other pictures, it seemed to give a more objective answer.

But if you think about what the computer says, you can’t say it’s wrong. And assuming I hadn’t seen the original picture, but only this one, I wouldn’t be able to describe it myself.

It’s as if the computer is proving my prejudice against the picture. It’s telling me that I’m not objective enough.